Research Discovery – Technology Q&A

7th November 2023

Simon Gregory | CTO & Co-Founder at Limeglass | simon@limeglass.com

Hamish Risk | Head of Research Discovery at Substantive Research | hamish@substantiveresearch.com

The Premise

Hamish: It’s nice to sit down to discuss this complex topic with you, Simon. First of all, a provocative question: as both our companies specialise in Research Discovery, is it really that difficult? Hasn’t it been solved already?

Simon: Well yes! We have both solved it in our different ways and we think that a broader adoption of our solutions will be game-changing for the industry. But I think it will be informative for people to learn how difficult it is.

“If people are thinking of building their own discovery technology, they might want to think again.”

It might also be surprising how little either of our firms is using Large Language Models so far, and how much work you would have to do before even considering that approach.

The Challenge

Hamish: Ok, so why is it difficult then?

“You can’t use generalist tools on a specialist product like Investment Research.”

Simon: Investment Research is a unique challenge in itself because of its fascinating range of distinctive properties. People attempting to use generalist tools for investment research tend to gloss over these properties. Exciting new technologies such as Large Language Models and Vector Search have huge strengths (as do longer-standing tools like Lucene Search), but when you attempt to use them for Research Discovery, their weaknesses are always exposed somewhere.

The recent developments in LLMs are truly remarkable and will have impacts in many areas across the Financial industry, but perhaps not in the way people are currently envisaging. Our colleague Terry Sinclair put it nicely recently: “The impact that AI will have is hard to exaggerate, but easy to get wrong.” For example, I can see LLMs being a great tool for you at Substantive Research. I’m sure that automatically generating summaries of content for you to review is a great benefit. You are an expert in this area, so you know that you need to check the summaries for hallucinations, and you are well placed to spot and correct them if they occur. I’m sure you would agree, though, that unleashing this sort of summarisation without human oversight directly to the consumer would be very dangerous.

“Summarising is just one small part of Research Discovery, of course. Search is another very important one.”

For search, I’ve heard many people say that Vector Search is the solution. But the realities of that technology, combined with the unique difficulties of Investment Research, make that highly problematic.

Investment Research is Unique

Hamish: Ok before going deeper into search, what are the unique challenges with Investment Research as a product?

Simon: There are many: the sheer volume of research produced and disseminated; the time-sensitivity of content as financial events unfold in real time; the fact that it is heavily regulated (especially Equity Research); and the heterogeneity of the content, i.e. everything from difficult document formats (PDFs are a nightmare!) to a wide range of highly specialised subject matters (each with its own inconsistently overlapping jargon), mixed in with both technical financial language AND idiomatic language (slang, jokes, song lyrics).

Those are some of the esoteric challenges of Investment Research. But there are also areas of hidden value that most technological approaches ignore.

One is that most text processing systems (including LLMs and Vector Search) only take plain, unformatted text as input. Turning heavily formatted and structured text from a research document into plain text is a big challenge in itself. But at Limeglass, we have invested significant R&D in extracting spatial cues like formatting and document structure from the original PDF or HTML.

“Correctly interpreting title and subtitle structures, bullets/lists and inline formatting can tell you a lot more about the author’s intentions than if you just scrape out the plain text.”
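As a rough illustration of what keeping those cues means, the sketch below uses Python's standard-library `HTMLParser` to turn simple HTML into blocks that remember their structural role, the heading they sit under, and any bold or italic runs. It assumes clean HTML input. The tag-to-role mapping and the `Block`/`StructureExtractor` names are hypothetical, this is not how Limeglass does it, and PDFs, where structure has to be inferred from layout, need far more work.

```python
from dataclasses import dataclass, field
from html.parser import HTMLParser
from typing import List, Optional

# Hypothetical mapping from HTML tags to the structural roles we want to keep.
ROLES = {"h1": "title", "h2": "subtitle", "h3": "subtitle", "li": "list_item", "p": "paragraph"}
EMPHASIS = {"b", "strong", "i", "em"}


@dataclass
class Block:
    role: str               # e.g. "title", "subtitle", "list_item", "paragraph"
    text: str               # whitespace-normalised text of the block
    section: Optional[str]  # most recent title/subtitle above (or at) this block
    emphasised: List[str] = field(default_factory=list)  # bold/italic runs


class StructureExtractor(HTMLParser):
    """Turns simple HTML into blocks that keep their role and enclosing heading.

    Nested blocks (e.g. <p> inside <li>) are not handled in this sketch.
    """

    def __init__(self):
        super().__init__()
        self.blocks: List[Block] = []
        self._role: Optional[str] = None
        self._parts: List[str] = []
        self._emph: List[str] = []
        self._in_emph = False
        self._section: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag in ROLES:
            self._role, self._parts, self._emph = ROLES[tag], [], []
        elif tag in EMPHASIS:
            self._in_emph = True

    def handle_endtag(self, tag):
        if tag in EMPHASIS:
            self._in_emph = False
        elif tag in ROLES and self._role is not None:
            text = " ".join("".join(self._parts).split())
            if self._role in ("title", "subtitle"):
                self._section = text
            self.blocks.append(Block(self._role, text, self._section, self._emph))
            self._role = None

    def handle_data(self, data):
        if self._role is None:
            return  # text outside any block we track is ignored
        self._parts.append(data)
        if self._in_emph and data.strip():
            self._emph.append(data.strip())
```

For example, feeding `<h2>Rates outlook</h2><p>We expect <b>two cuts</b> in 2024.</p>` yields a `subtitle` block followed by a `paragraph` block whose `section` is "Rates outlook" and whose `emphasised` list is `["two cuts"]`. That is information a plain-text scrape would discard.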

And there is another, deeper issue with the content itself. Investment Research tends to be at its most valuable in times of change, particularly around Black Swan events.

“Handling content dealing with changing times cannot be left to systems which rely on rigid models that are difficult to update in a predictable manner.”

For example, GPT-4 has only recently been trained on data from beyond January 2022. Since then there has been a war in Ukraine, “Lower for Longer” has been replaced by fears of stagflation and recession, “contagion” has become a term more associated with financial market stress than with the pandemic, and Elon Musk has bought Twitter and renamed it X. In this industry, it doesn’t take long for important aspects of a model to become outdated, rendering it useless for Research Discovery.

Importance of Knowledge Graphs

Hamish: How does Limeglass deal with Black Swan events and big changes?

Simon: This is where being a specialist service-provider to the Investment Research industry comes in.

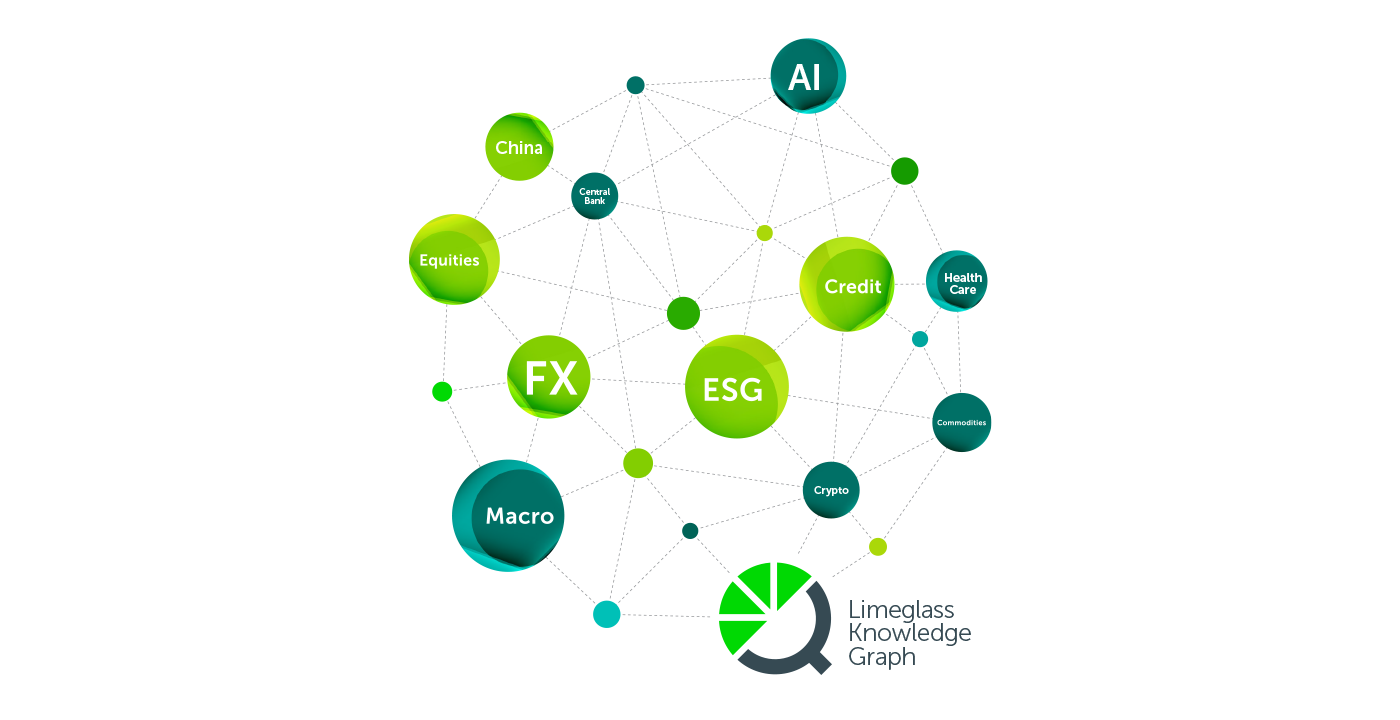

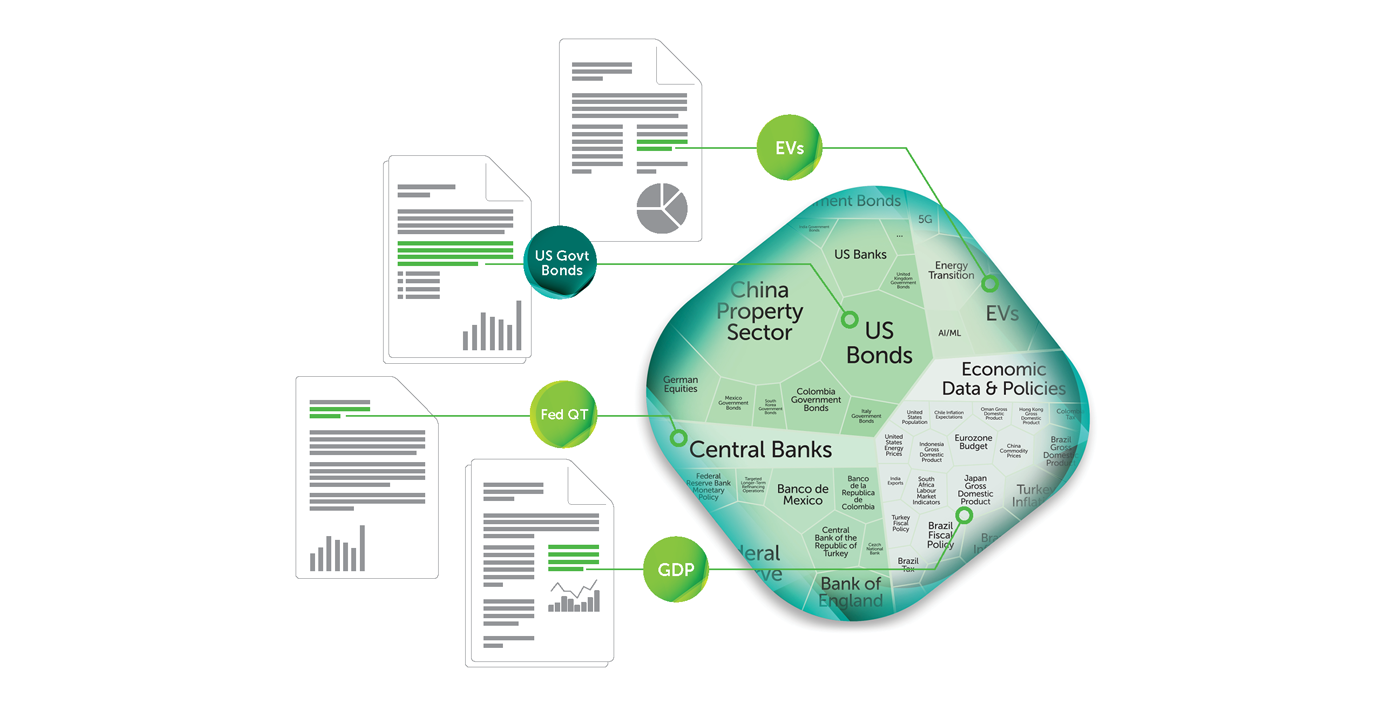

“Limeglass built a Knowledge Graph which has over 170,000 hierarchical and interconnected tags.”

The knowledge graph is effectively a codified and constantly updated map of financial market expertise, which we use to tag documents. This is where the Limeglass approach of bringing human domain expertise and AI together really comes to the fore. Because the graph is managed by a team, we can easily add “X” to the Twitter lexicon, or reassign the probability that mentions of “contagion” relate to financial market stress in 2023, whereas back in 2020-22 they were more likely to relate to the COVID pandemic. It also means that a massive, complex concept like Inflation is not just about finding the word “inflation”. The knowledge graph connects all the associated concepts together in a deliberate, meaningful structure (e.g. how food prices relate to CPI, to headline inflation, and so on).
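The following is a minimal sketch of the idea, built on a toy graph. Concepts have curated parents, a lexicon maps surface forms to concepts, and ambiguous terms carry date-dependent sense probabilities. Every name, concept and number here (`PARENTS`, `LEXICON`, `SENSES`, the 0.8/0.2 weights) is hypothetical and far simpler than a production graph. The point is that each change is a small, human-readable edit rather than a retraining exercise.

```python
import re
from datetime import date

# A toy, hand-curated knowledge graph (hypothetical; not Limeglass's schema).
# Each concept lists its parents, so a tag on "Food Prices" rolls up to "Inflation".
PARENTS = {
    "Food Prices": ["CPI"],
    "CPI": ["Headline Inflation"],
    "Headline Inflation": ["Inflation"],
    "Twitter": ["Social Media"],
    "Financial Contagion": ["Financial Market Stress"],
    "COVID-19": ["Pandemics"],
}

# Lexicon: surface form -> concept. Curators edit this directly.
LEXICON = {"food prices": "Food Prices", "cpi": "CPI", "twitter": "Twitter"}

# Ambiguous terms: (valid_from, {concept: probability}) entries, oldest first.
SENSES = {
    "contagion": [
        (date(2020, 1, 1), {"COVID-19": 0.8, "Financial Contagion": 0.2}),
        (date(2023, 1, 1), {"Financial Contagion": 0.8, "COVID-19": 0.2}),
    ],
}


def ancestors(concept):
    """The concept itself plus every concept above it in the hierarchy."""
    seen, stack = [], [concept]
    while stack:
        c = stack.pop()
        if c not in seen:
            seen.append(c)
            stack.extend(PARENTS.get(c, []))
    return seen


def sense_weights(term, published):
    """The curated sense probabilities in force on the publication date."""
    current = {}
    for valid_from, weights in SENSES.get(term, []):
        if valid_from <= published:
            current = weights
    return current


def tag(text, published):
    """Return {concept: weight} for a passage, rolled up the hierarchy."""
    # Pad a normalised token string so " x " matches the word X, not every "x".
    padded = " " + " ".join(re.findall(r"[a-z0-9-]+", text.lower())) + " "
    hits = {concept: 1.0 for form, concept in LEXICON.items() if f" {form} " in padded}
    for term in SENSES:
        if f" {term} " in padded:
            hits.update(sense_weights(term, published))
    tags = {}
    for concept, weight in hits.items():
        for a in ancestors(concept):
            tags[a] = max(tags.get(a, 0.0), weight)
    return tags
```

In this sketch, adding “X” to the Twitter lexicon is a one-line curated change, `LEXICON["x"] = "Twitter"`. The tagger then reads "contagion" in a March 2023 note as Financial Market Stress (0.8). The same sentence dated mid-2021 leans towards COVID-19 instead. Note that "Food prices rose" is tagged with Inflation even though the word never appears.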

What about Embeddings?

Hamish: I think some people will wonder how what you have just described is different from vector embeddings. What are the differences?

Simon: Embeddings are essentially a set of numbers that represent text as coordinates on a huge multidimensional map. In this map, similar concepts are grouped together closely in various ways. They are used as inputs to machine learning models and also as the primary indexed unit in vector databases.
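To make the “map” idea concrete, here is a minimal sketch of the usual way to measure similarity between embeddings: cosine similarity, the cosine of the angle between two vectors. The four-dimensional vectors are made-up numbers for illustration only. A real model would produce hundreds or thousands of dimensions, and no individual dimension would be interpretable.

```python
import math

# Toy 4-dimensional "embeddings" (hypothetical numbers, for illustration only).
EMBEDDINGS = {
    "rate hike": [0.9, 0.1, 0.0, 0.2],
    "monetary tightening": [0.8, 0.2, 0.1, 0.3],
    "food prices": [0.1, 0.9, 0.2, 0.0],
    "nuclear fission": [0.0, 0.1, 0.9, 0.4],
}


def cosine(a, b):
    """Cosine similarity: 1.0 means same direction, 0.0 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def nearest(query, k=2):
    """Rank stored phrases by cosine similarity to a query vector."""
    scored = [(cosine(query, v), phrase) for phrase, v in EMBEDDINGS.items()]
    return [phrase for _, phrase in sorted(scored, reverse=True)[:k]]
```

With these numbers, `nearest(EMBEDDINGS["rate hike"])` returns `["rate hike", "monetary tightening"]`. The two phrases sit close together on the map, but only because this particular (imaginary) model placed them there.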

Some fundamental properties of embeddings are important to understand. Every single model (and subsequent evolutions of that model) defines a brand new multidimensional map. The dimensions and the numbers in the embeddings themselves are opaque.

“Embeddings have meaning only inside a specific model, which itself is a black box, whereas a knowledge graph deliberately defines reusable concepts and is intelligible to humans.”

So, if you want to add new concepts or adjust their relationships, that can only be done by retraining the model with more data – you can’t go and tweak one tiny part of it on its own.

Even the retraining process is not the simple exercise many people believe it to be. You need to feed the model with a lot of carefully curated information, paying particular attention to noise, bias and overfitting, and there is no guarantee that the result of retraining will be an improvement in the area you want, or won’t make something else worse.

Secondly, once the model has been retrained, the map has changed. All previous embeddings are now redundant, and your entire corpus of research needs to be reprocessed and re-indexed with new embeddings, with all the additional cost and computing power that entails.

Finally, for Black Swan events, where investment research is at its most valuable (such as the COVID outbreak, or more recently the collapses of SVB and Credit Suisse), there isn’t even any existing data to train the model on.
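The re-indexing problem can be sketched with a toy vector index that records which model produced each vector. The model identifiers are hypothetical. Once the model changes, every stored vector is stale. A sensible index should therefore refuse to compare vectors from different models until the corpus has been re-embedded.

```python
import math
from dataclasses import dataclass, field


@dataclass
class VectorIndex:
    """Toy vector index that records which embedding model produced each vector."""

    model_id: str
    entries: dict = field(default_factory=dict)  # doc_id -> (model_id, vector)

    def add(self, doc_id, vector, model_id):
        self.entries[doc_id] = (model_id, vector)

    def stale(self):
        """Documents embedded with a model other than the current one."""
        return sorted(d for d, (m, _) in self.entries.items() if m != self.model_id)

    def upgrade(self, new_model_id):
        """Switch to a retrained model; every old vector is now on the wrong map."""
        self.model_id = new_model_id
        return self.stale()

    def search(self, query, query_model_id, k=3):
        """Rank documents by cosine similarity, refusing to mix models."""
        if query_model_id != self.model_id:
            raise ValueError("query was embedded with a different model")
        if self.stale():
            raise RuntimeError(f"{len(self.stale())} documents need re-embedding first")

        def cos(a, b):
            return sum(x * y for x, y in zip(a, b)) / (math.hypot(*a) * math.hypot(*b))

        ranked = sorted(self.entries, key=lambda d: cos(query, self.entries[d][1]), reverse=True)
        return ranked[:k]
```

After `upgrade("model-v2")`, the index reports every document as stale. Search stays blocked until each one has been re-embedded with the new model, which is exactly the whole-corpus cost described above.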

Search

Hamish: Research Discovery is obviously more than just search. But search is quite a high priority for research consumers to make sure they can find specific content they need. How are people currently approaching search and how, in your opinion, should they approach it?

Simon: There is no single sensible approach to search. You should be guided by what you want to get out of it. If you’ve got a very wide corpus of information and a wide audience who want to search absolutely anything, and you’re happy to provide them generic results, then using full-text or vector search as the core component makes sense.

However, if you have a relatively niche, nuanced and semi-structured domain where control, auditability, accuracy and relevance are more important, then having a knowledge graph at the centre of it makes more sense.

“The great thing is that knowledge graphs can be integrated to enhance existing search technologies, so the ultimate solution in this space looks like a combination of the two.”
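One way such a combination could look is sketched below. An existing full-text or vector engine supplies candidates with a base score. The knowledge graph then lifts documents tagged with the query concept or anything beneath it. The `CHILDREN` links, the additive boost and `graph_weight=0.5` are assumptions for illustration, not a recommended tuning.

```python
# Hypothetical parent -> children links from a curated knowledge graph.
CHILDREN = {
    "Inflation": ["Headline Inflation", "Core Inflation"],
    "Headline Inflation": ["CPI"],
    "CPI": ["Food Prices", "Energy Prices"],
}


def descendants(concept):
    """The concept plus everything beneath it in the hierarchy."""
    found = [concept]
    for child in CHILDREN.get(concept, []):
        found.extend(descendants(child))
    return found


def hybrid_rank(query_concept, candidates, graph_weight=0.5):
    """Re-rank candidates from an existing search engine using graph tags.

    candidates: (doc_id, base_score, tags) tuples, where base_score comes from
    full-text or vector search (assumed normalised to 0-1) and tags is the set
    of knowledge-graph concepts the document was tagged with.
    """
    wanted = set(descendants(query_concept))
    scored = [(base + (graph_weight if wanted & tags else 0.0), doc_id)
              for doc_id, base, tags in candidates]
    return [doc_id for _, doc_id in sorted(scored, reverse=True)]
```

For example, `hybrid_rank("Inflation", [("a", 0.7, {"Equities"}), ("b", 0.5, {"Food Prices"}), ("c", 0.4, set())])` returns `["b", "a", "c"]`. The note tagged only with Food Prices is lifted above a higher-scoring text match, because the graph places food prices under Inflation.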

Hamish: How would a combination of these technologies come together best for the Research industry? People are talking about “RAG” quite a lot. Is that what you mean?

Simon: RAG (Retrieval Augmented Generation) is a great example of this.

The best-known example of RAG at the moment is Microsoft Bing Chat, which allows users to search the web via a chatbot. It uses traditional Bing search to find content related to the user’s query, then uses OpenAI’s GPT to generate an answer from the content of the results, in an attempt to meet the user’s expectations.

“When it comes to searching for complex content, understanding the user’s intent is important.”

Ideally, when users ask a question, your system should understand what they were trying to ask rather than interpreting the query too literally. Bing Chat is a great example of this working better than traditional search.

But for Investment Research, you need to be a little bit careful with this.

The quality of RAG output ultimately depends on the quality of the search results and on identifying concise, relevant information based on the ‘prompt’. There is a lot of active research into how to optimise this search/LLM interaction, and at Limeglass we already have a neat solution that works out of the box to provide LLMs with relevant, concise and clean information. We can also go one step further and show the actual relevant source content alongside the result.

Because we have a head start in this area, we’ve moved on to other ideas where the power of LLMs can help interpret the user’s intent and rank the relevant extracted original content, without exposing the user to the risks of hallucinations and overly assertive incorrect answers.
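Here is a minimal RAG sketch under stated assumptions. Retrieval is a simple tag filter over pre-tagged passages, and `generate` stands in for any LLM call (no real API is used). The function returns the source passages alongside the answer so they can be shown to the user. If nothing relevant is retrieved, it returns no answer rather than letting the model improvise. The `Passage` type and the prompt wording are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    doc_id: str
    text: str
    tags: frozenset  # concepts assigned by an upstream tagger (assumed)


def retrieve(passages, query_tags, max_passages=3):
    """Keep passages tagged with every query concept, capped to stay concise."""
    return [p for p in passages if query_tags <= p.tags][:max_passages]


def build_prompt(question, context):
    """Number each passage so the answer can cite, and be checked against, its source."""
    sources = "\n".join(f"[{i}] ({p.doc_id}) {p.text}" for i, p in enumerate(context, 1))
    return ("Answer using only the numbered sources below and cite them like [1].\n"
            f"{sources}\n\nQuestion: {question}")


def answer_with_sources(question, query_tags, passages, generate):
    """Retrieve, prompt, generate, and return the sources for display alongside.

    `generate` is any callable taking a prompt string and returning text; it
    stands in for an LLM call.
    """
    context = retrieve(passages, query_tags)
    if not context:
        return None, []  # nothing relevant found: do not let the model improvise
    return generate(build_prompt(question, context)), context
```

Keeping retrieval separate from generation means the passages shown to the user are the exact text the model was given. The reader can therefore check any claim in the answer against its source.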

Quant

Hamish: One thing that some of our readers might be confused about is how Research Discovery relates to existing large-scale analysis of text for investment purposes. Some people will be reading this saying “we’ve been doing Natural Language Processing (NLP) for years! What’s new?”

Simon: Yes, that’s a good point. Machine Learning has been around for a long time, and plenty of Quant funds have used NLP tools to analyse things like the Fed minutes or company earnings calls for sentiment, and have based trading strategies on the results. I’m sure that the emergence of LLMs is already having an impact in this area as well. For this sort of use case, we are not saying you should replace any existing or emerging tools – there are clear benefits to using vector embeddings and black box algorithms to study this data en masse. But the unique way we process research is additive to that. It can provide new dimensions to content analysis, effectively becoming an additional alternative data source.

Hamish: Do you have any examples of how people are approaching those Quant use cases?

Simon: Yes, one of the areas people are exploring is whether there are any meaningful patterns in the way certain subjects are written about in relation to each other. We call this Topic Co-Occurrence, and it is easy for us to make it available to Data Scientists. We can measure how much certain topics are written about in the context of others.

Now, crucially, this is different from simply charting the coverage of two themes against each other, which traditional NLP tools are already good at. Our approach allows a deeper analysis of relationships. For example, we can examine the extent to which analysts are writing about recession risks in the context of central bank policy rates, because we can see where those concepts co-occur in the same context, such as in individual or adjacent paragraphs (or even in relation to spatial cues like titles and lists). If you see spikes or troughs in these sorts of relationships, that might have some informational value (especially when compared with other relationships, e.g. looking at the slow death of “Lower for Longer” as a theme)!
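Below is a minimal sketch of measuring co-occurrence within the same or adjacent paragraphs. It assumes each document has already been tagged paragraph by paragraph. The tags and the `window` parameter are hypothetical, and this is not Limeglass's metric. Counting per month gives a series whose spikes and troughs could then be examined.

```python
from collections import defaultdict


def co_occurrences(paragraph_tags, a, b, window=1):
    """Count paragraphs tagged `a` that have `b` in the same paragraph or
    within `window` paragraphs either side, in the same document."""
    count = 0
    for i, tags in enumerate(paragraph_tags):
        if a not in tags:
            continue
        lo, hi = max(0, i - window), min(len(paragraph_tags), i + window + 1)
        if any(b in paragraph_tags[j] for j in range(lo, hi)):
            count += 1
    return count


def monthly_series(documents, a, b, window=1):
    """Sum co-occurrences per month; `documents` is [(yyyy_mm, [tag sets])]."""
    series = defaultdict(int)
    for month, paragraph_tags in documents:
        series[month] += co_occurrences(paragraph_tags, a, b, window)
    return dict(sorted(series.items()))
```

Setting `window=0` restricts matches to the same paragraph. Widening it captures adjacent paragraphs, at the cost of looser relationships.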

Hamish: And is Topic Co-Occurrence, as you have described it, something you can do with LLMs and vector embeddings?

Simon: Yes, potentially. But you would have to do it in a very targeted way, running an algorithm specifically aimed at the topics you wanted to analyse. Our approach uses content that has already been tagged, where we have already measured the “coverage” of those tags in every paragraph, so we automatically generate the data for ANY combination of topics – it just needs to be examined!
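The precomputation point can be sketched as follows. With tags already attached to each paragraph, a single pass counts every topic pair at once, so any combination can be looked up later without reprocessing the text. This sketch counts same-paragraph pairs only and assumes an upstream tagger. The topic names are illustrative.

```python
from collections import Counter
from itertools import combinations


def pair_counts(paragraphs):
    """One pass over tagged paragraphs yields counts for every topic pair.

    `paragraphs` is an iterable of tag sets (one set per paragraph). Pairs are
    stored in sorted order, so ("CPI", "Recession") and ("Recession", "CPI")
    share one key.
    """
    counts = Counter()
    for tags in paragraphs:
        counts.update(combinations(sorted(tags), 2))
    return counts


def lookup(counts, a, b):
    """Query any combination after the fact, with no new pass over the text."""
    return counts[tuple(sorted((a, b)))]
```

Because `Counter` returns zero for missing keys, asking about a pair that never co-occurred simply yields 0 rather than an error.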

And this is a good segue to an important point about research discovery in general.

Classification

“Financial market participants love to classify things.”

I struggle to think of another industry where clearly defined classifications and relationships matter as much as they do in Financial Markets. There are organisations whose primary offering is exactly this: think of GICS sector classifications. Some institutions dedicate substantial resources to defining their own subject classifications to gain a competitive edge.

“Should Nuclear Fission be classified as Clean Energy? There are split opinions on this that cannot be comfortably captured in an embedding.”

The categorisation of something like Nuclear Fission has ramifications for all sorts of things including equity valuations, or whether a company can be included in a particular ESG fund or index.

Defining categories deliberately is, therefore, very important for financial market participants.

In the Nuclear Fission example, if you left the classification entirely to an LLM, it would just come down to what was learned from the training data and the result could go either way, or even more unhelpfully, end up as some fuzzy combination of the two.
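As a sketch of what deliberate classification could mean in practice, each institution's view is stored as an explicit, auditable decision, and a fund screen follows that view exactly. Undecided cases raise an error rather than falling back to a fuzzy guess. The house names, rationales and companies are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    topic: str
    category: str
    member: bool
    rationale: str  # kept so the classification can be audited later


# Hypothetical house views: both include Solar, but they disagree on Nuclear Fission.
HOUSE_VIEWS = {
    "house_a": [
        Decision("Solar", "Clean Energy", True, "renewable generation"),
        Decision("Nuclear Fission", "Clean Energy", True, "low-carbon generation"),
    ],
    "house_b": [
        Decision("Solar", "Clean Energy", True, "renewable generation"),
        Decision("Nuclear Fission", "Clean Energy", False, "radioactive waste concerns"),
    ],
}


def is_member(house, topic, category):
    """Look up an explicit decision; there is deliberately no fuzzy fallback."""
    for d in HOUSE_VIEWS[house]:
        if d.topic == topic and d.category == category:
            return d.member
    raise KeyError(f"{house} has no recorded decision for {topic!r} in {category!r}")


def eligible(house, companies, category="Clean Energy"):
    """Screen companies (name -> primary activity) against one house's taxonomy."""
    return sorted(name for name, activity in companies.items()
                  if is_member(house, activity, category))
```

The same two companies produce different fund universes under the two house views. Each outcome can be traced back to a recorded decision rather than to whatever a model happened to learn.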

Super Users

Simon: I think it’s time we switched hats, Hamish. I am going to ask you a question! At Substantive Research, you are users of the Limeglass Portal which helps with your own research discovery process. Could you describe the “super user” role that you play in that process? It is obviously one thing for us all to talk about AI and all these amazing technologies, but it is another thing to learn from people who are using them to extract real value for their clients.

Hamish: Yes I suppose being the “super user” is our core proposition. We want to be the window to the wider research market for our time-poor portfolio manager clients. We can interpose ourselves in the process to guide them not just to relevant research, but to quality research, and even to help them select which research providers are the best fit for them.

“Our bespoke service requires us to consume large amounts of research in an efficient way.”

We can use a mixture of old-fashioned methods (for example, we happen to know that certain analysts are always worth reading when they write about a particular topic) and new methods like the Limeglass portal. Once those methods have identified the most relevant research for our clients, our curation team can check the robustness of the analysis, assess its general quality (which requires “experienced subjectivity”), and send the curated content on to the client.

Artificial & Organic Intelligence

Simon: And as you are super users, you are also well set to benefit, perhaps, from things like Large Language Model summaries, because you know the risks and how to mitigate them.

Hamish: Yes, I think that’s right. We would not use an LLM to do the curation for us. That would defeat the point of the highly bespoke approach we are taking to answering client questions. But we could certainly use LLM-generated summaries to help us decide which research to read in the first place. This would be a bit like the way you described Limeglass using LLMs at very limited stages of your search process.

“We can use the LLM to help us filter and synthesise, before applying our human oversight and sending something valuable to a client.”

So Simon, final question: is there anything else you’d like to add to this subject?

Simon: Loads! I could write whole books on each little area. We haven’t even touched on the complex computational geometry and statistics required to process PDFs and to make our Rich-NLP work effectively. We also haven’t talked much about the best approach to finding properly relevant content (as opposed to just passing mentions of something); or how to disambiguate when words or phrases mean different things in different contexts; we only briefly went over the heterogeneity of research content, but there is plenty more to discuss there; and of course, the regulation of investment research is a major barrier to treating it like any other piece of content – that is worth a whole session in itself.

Hamish: Well, luckily, we will have time for all of that soon as we will be hosting a breakfast session with select guests from the Buy Side and Sell Side in early December.

Simon: Absolutely. Looking forward to picking this up with you again over coffee and eggs!


Limeglass Ltd is a Fintech providing Research Discovery services to producers and consumers of Investment Research across the Buy Side and Sell Side. Limeglass combines the power of AI & human domain expertise to turn unstructured research documents into rich, structured data, unlocking a variety of use cases to improve the utilisation of content for consumers and the production ROI for producers.

Substantive Research offers independent comparison and discovery on investment research, ESG and market data pricing and products. Substantive’s Research Discovery tool is designed to be an investor’s window to the investment research market, matching investors to the providers that best suit their investment process requirements.

This article was co-authored by Hamish Risk at Substantive Research & Simon Gregory at Limeglass Ltd.