Limeglass is the piece you’re missing from your Investment Research AI strategy

Oliver Hunt | Chief Product Officer | oliver.hunt@limeglass.com

Anecdotally, there is a pretty clear message coming from investment bank technology teams at the moment: “there is no money for anything new, unless it’s AI.”

Those of us selling AI solutions to the banks, therefore, are pretty happy with this situation. There is a general understanding that what we do is of value, even during a belt-tightening, wait-and-see-what-the-Fed-does-next sort of environment.

That being said, we are also hearing that some of these well-funded AI projects have started running into difficulty because of a missing component…

…A component that we at Limeglass just so happen to provide!

A knowledge graph to connect data and standardise the client expertise

One of the main projects being undertaken by banks and independent research providers at the moment is using advances in Large Language Model (LLM) technology to enhance their document search capabilities.

Let’s face it: most existing search engines for research documents fall woefully short of users’ expectations. Perhaps it is no surprise, therefore, that only a small percentage of content discovery happens through banks’ search engines.

Understandably, there is a lot of excitement about the prospects for vector search and RAG (Retrieval Augmented Generation) to change the paradigm, and early results appear to be very promising.

However, two issues arise with this LLM-only approach:

- Categorisation: While individual searches for content seem to be getting good results, categorising those search results into a consistent framework is proving difficult.

- User expectations: As search is such a small component of content discovery, there is not much point in upgrading search capabilities while other forms of discovery (distribution, portal display, etc.) languish in the dark ages.

Neither problem suggests that the wrong technology is being used, only that it needs to be complemented and enhanced by another technology: knowledge graphs.

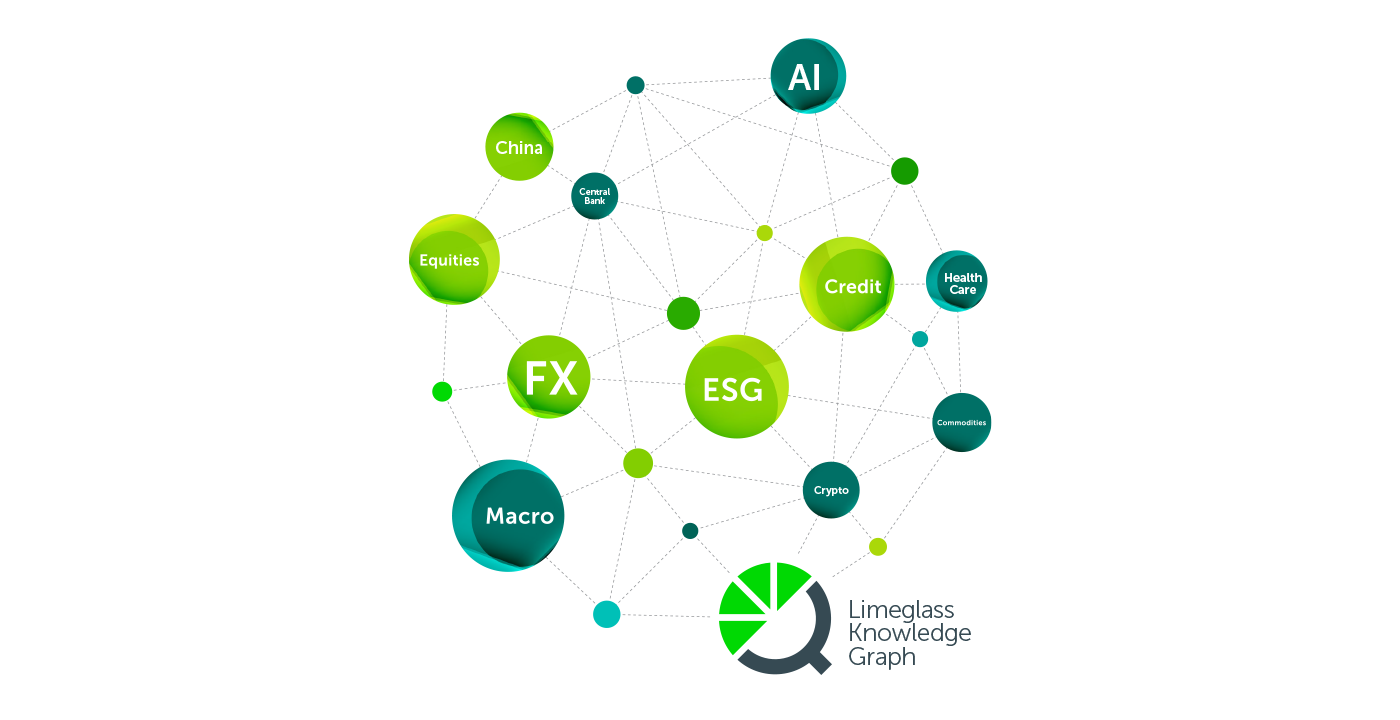

Limeglass has a proprietary AI content discovery system built on top of an industry-leading knowledge graph of more than 170,000 tags. This graph codifies and standardises rich content categories from across Equity, Macro, Economics, ESG, and Thematic research. Using this knowledge graph in conjunction with vector search (or, in some cases, instead of vector search) has enormous potential for research providers to optimise how their clients use their content.

Imagine this scenario:

- An investor client asks a salesperson for the latest research looking into the effects of the upcoming US Presidential Elections on the US Economy.

- Traditionally, fulfilling this request accurately would have been a difficult task without reading ALL the research, but the salesperson uses a snazzy new vector search tool to find useful extracts from various Economics and Strategy documents which can then be provided back to the client.

- Thrilled with this result, the client then asks the salesperson to send them any similar content as soon as it is published over the next several months.

- At this point, the salesperson has a choice:

  - a) Commit to running the search manually every day and compiling the results for the client. This would quickly become burdensome, especially if other clients started asking for a similar service on different subjects.

  - b) Use existing tagging systems and update the client’s distribution preferences. This, however, would likely disappoint the client. With existing tagging systems, the closest match they might get is “US Economics” or “US Elections” separately. The client would then be bombarded with countless irrelevant reports that do not address what they actually want: the two concepts together.

- How much better would it be, therefore, if the salesperson could rely on a tagging system that already categorised content at the “US Elections + US Economics” level of granularity? With a Limeglass knowledge graph, this is entirely possible: the salesperson could sign the client up to receive, automatically, any research that covers that topic, based on the knowledge graph tags.
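To make the difference between these options concrete, here is a minimal Python sketch of three ways a distribution system could match a subscription against tagged research. It is an illustration under stated assumptions, not Limeglass’ implementation or API: the `Atom` and `Report` classes, the tag names, and the sample reports are all hypothetical, and we assume each report has already been split into paragraph-sized “atoms”, each carrying knowledge-graph tags.

```python
from dataclasses import dataclass


@dataclass
class Atom:
    """A paragraph-sized piece of a report plus the tags it covers (hypothetical model)."""
    text: str
    tags: frozenset[str]


@dataclass
class Report:
    title: str
    atoms: list[Atom]

    @property
    def tags(self) -> frozenset[str]:
        # Document-level tags: the union of the tags on every atom.
        return frozenset().union(*(atom.tags for atom in self.atoms))


def match_any_tag(report: Report, wanted: set[str]) -> bool:
    # Coarse rule: deliver if ANY subscribed tag appears anywhere in the report.
    return bool(report.tags & wanted)


def match_all_tags_in_report(report: Report, wanted: set[str]) -> bool:
    # Stricter rule: every subscribed tag appears somewhere in the report,
    # possibly in unrelated sections.
    return wanted <= report.tags


def match_same_atom(report: Report, wanted: set[str]) -> bool:
    # Granular rule: a single atom covers every subscribed tag,
    # i.e. the concepts are discussed together.
    return any(wanted <= atom.tags for atom in report.atoms)


# Made-up sample reports and tags, for illustration only.
reports = [
    Report("Weekly macro outlook", [
        Atom("How each election outcome could shift US fiscal policy and growth.",
             frozenset({"US Elections", "US Economics"})),
        Atom("Euro area PMIs softened again.", frozenset({"Eurozone Economics"})),
    ]),
    Report("Polling update", [
        Atom("Swing-state polls tightened this week.", frozenset({"US Elections"})),
    ]),
    Report("US payrolls preview", [
        Atom("We expect a moderate payrolls print.", frozenset({"US Economics"})),
    ]),
    Report("Strategy roundup", [
        Atom("Scenarios for election-night market volatility.", frozenset({"US Elections"})),
        Atom("The Fed path after the latest jobs data.", frozenset({"US Economics"})),
    ]),
]

wanted = {"US Elections", "US Economics"}

for rule in (match_any_tag, match_all_tags_in_report, match_same_atom):
    print(rule.__name__, [r.title for r in reports if rule(r, wanted)])
```

Matching on any subscribed tag sends the client all four reports. Requiring both tags somewhere in a report still lets through the roundup that covers elections and the economy in separate sections. Only requiring both tags on the same atom isolates the one report that discusses the two concepts together.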

Of course, that is just one example. Using Limeglass’ knowledge graph in conjunction with vector search and RAG has the potential to solve all of your content discovery problems in one connected solution. The knowledge graph means that granular atoms of content can be treated as human-readable data and connected across distribution systems, portals, CRMs, authoring platforms, and much more.

In addition, at the more technical end of things, we have found that existing strategies for “chunking” source documents in RAG workflows struggle to provide concise, sufficiently high-quality inputs efficiently at scale, particularly when dealing with time-sensitive content like investment research. This is another benefit of bringing Limeglass into your RAG solution: the Limeglass Research Atomisation™ technology can optimise the LLM inputs using Atomic Query, and also provide valuable human-understandable context alongside the final result. Atomic Query efficiently homes in on the relevant parts of each document using the power of the knowledge graph and a full contextual understanding of the document structure. We have a custom RAG tool that uses this on Bank of England documents, and the results are streets ahead of any other RAG tool we have seen.
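The chunking problem can be illustrated with a generic Python sketch. This is not Atomic Query, whose internals are not described here; it simply contrasts naive fixed-size character chunking with splitting on section headings. The `## ` heading marker, the 40-character chunk size, and the sample text are hypothetical assumptions chosen for illustration.

```python
def fixed_size_chunks(text: str, size: int) -> list[str]:
    # Naive strategy: cut every `size` characters, ignoring sentences and headings.
    return [text[i:i + size] for i in range(0, len(text), size)]


def section_chunks(text: str) -> list[str]:
    # Structure-aware strategy (assumes "## " marks a section heading):
    # one chunk per section, keeping the heading as context for its body.
    chunks: list[str] = []
    current: list[str] = []
    for line in text.splitlines():
        if line.startswith("## ") and current:
            chunks.append("\n".join(current).strip())
            current = []
        current.append(line)
    if current:
        chunks.append("\n".join(current).strip())
    return chunks


# Made-up sample document, for illustration only.
doc = """## Inflation outlook
Services inflation remains sticky.
## Labour market
Vacancies continue to fall."""

for i, chunk in enumerate(fixed_size_chunks(doc, 40)):
    print("fixed", i, repr(chunk))
for chunk in section_chunks(doc):
    print("section", repr(chunk))
```

With a 40-character window, the second fixed-size chunk mixes the end of the inflation section with the start of the labour-market section and cuts a word in half, whereas the heading-based split keeps each section intact alongside its heading.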

We will write more about this soon. In the meantime, please feel free to get in touch to book a demo or to learn more about expert Research Discovery with the professionals at Limeglass.